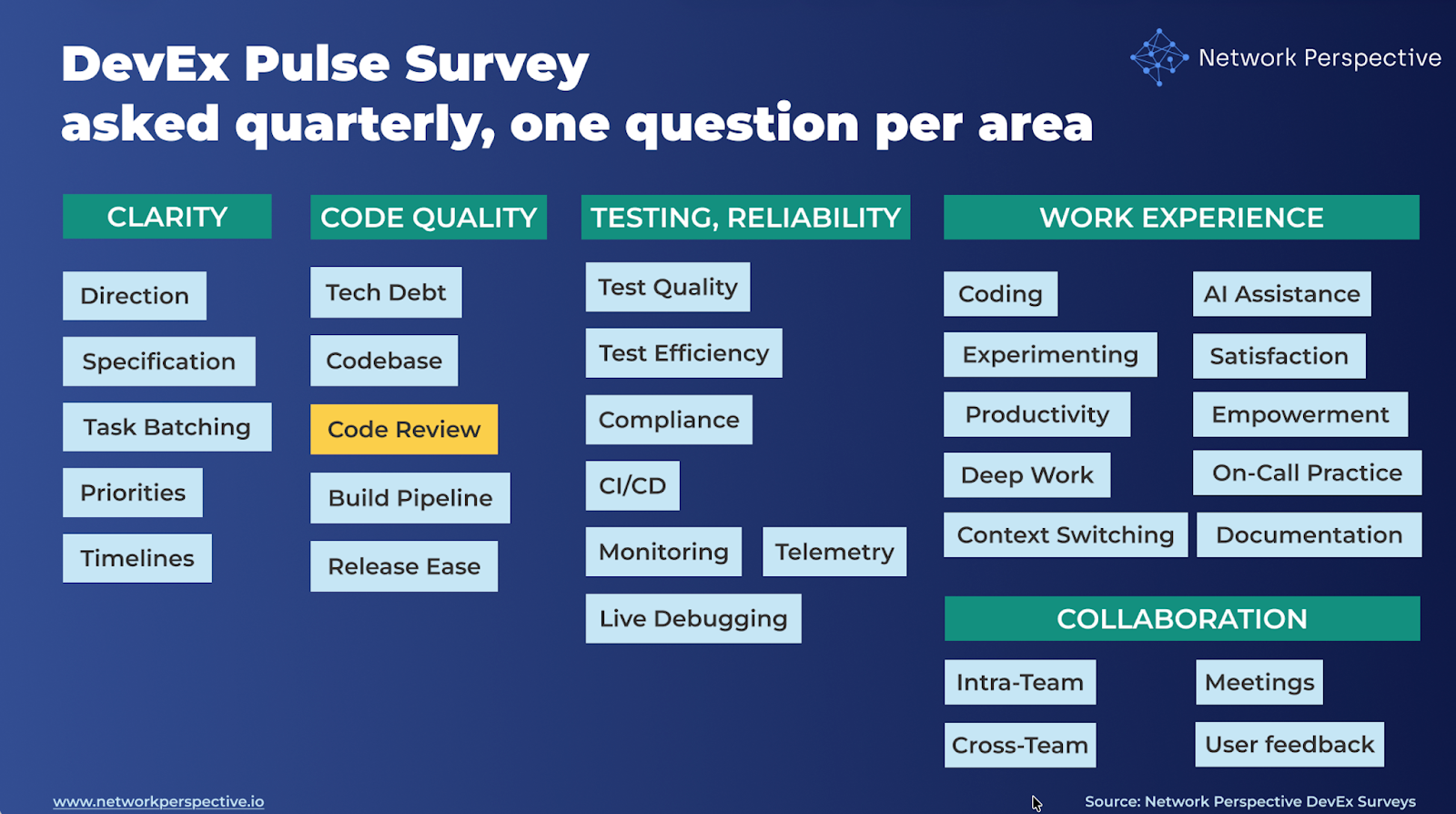

In our DevEx AI tool, we use two sets of survey questions: DevEx Pulse (one question per area to track overall delivery performance) and DevEx Deep Dive (a focused root-cause diagnostic when something needs attention).

DevEx Pulse tells us where friction is. DevEx Deep Dive tells us why it exists.

Let’s take a closer look at code review. If the Pulse question “Code reviews are timely and provide valuable feedback” receives low scores and developers’ comments reveal significant friction and blockers, what should you do next?

Here are 15 deep dive questions you can ask your developers to uncover the causes of friction in code review, along with guidance on how to interpret the results, common patterns engineering teams encounter, and practical first steps for improvement. This will help you pinpoint what’s causing the problem and fix it on your own, or move faster with our DevEx AI tool and expert guidance.

The real question is: Do code reviews help changes move forward and improve quality — or do they mostly create waiting, rework, and stress?

Deep dive questions should help you map how code review flows through your delivery process and identify where it breaks down:

Responsiveness → Expectations → Feedback → Depth → Flow → Coverage → Safety → Cost

Here’s how the DevEx AI tool helps uncover this.

Do reviews happen fast enough?

Is it clear what reviewers look for?

Does feedback actually help?

Are the right things reviewed?

Do reviews keep work moving?

Are reviews shared across the team?

Do reviews feel safe and respectful?

Ideas to spot or reduce friction?

Do code reviews help changes move forward and improve quality — or do they mostly create waiting, rework, and stress? Here’s how the DevEx AI tool helps make sense of the results.

Questions

What this section tests

Whether reviews are fast and predictable, or a source of waiting and uncertainty.

How to read scores

Key insight

Slow or unpredictable reviews turn finished work back into waiting work.

Open-ended comments – how to read responses

Key insight

Waiting time is one of the biggest hidden costs in delivery.
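If you also have repository data, you can cross-check the responsiveness answers against timestamps. The sketch below is a minimal illustration and not how the DevEx AI tool computes anything. It assumes a hypothetical `PullRequest` record with an opening time and a first-review time. It reports the median wait, which reflects speed, alongside the 90th percentile, which reflects predictability.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median, quantiles
from typing import List, Optional


@dataclass
class PullRequest:
    # Hypothetical record shape; map it from whatever your Git host exports.
    opened_at: datetime
    first_review_at: Optional[datetime]  # None means the PR is still waiting


def first_review_hours(prs: List[PullRequest]) -> List[float]:
    """Hours from opening to first review, for PRs that have received one.

    PRs still waiting are excluded, so this understates wait time while a queue is building.
    """
    return [
        (pr.first_review_at - pr.opened_at) / timedelta(hours=1)
        for pr in prs
        if pr.first_review_at is not None
    ]


def review_wait_summary(prs: List[PullRequest]) -> dict:
    """Median = typical speed; p90 and the p90/median ratio = how predictable it is."""
    hours = first_review_hours(prs)
    if len(hours) < 2:
        raise ValueError("need at least two reviewed PRs")
    med = median(hours)
    p90 = quantiles(hours, n=10, method="inclusive")[-1]  # last of 9 cut points = 90th percentile
    return {
        "median_h": med,
        "p90_h": p90,
        "spread": p90 / med if med > 0 else float("inf"),
    }
```

A large spread means some PRs wait far longer than the typical one. That is the “unpredictable” half of this section’s question, even when the median looks acceptable.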

Questions

What this section tests

Whether there is a shared understanding of what a good review looks like.

How to read scores

Key insight

Unclear review expectations cause rework and frustration.

Open-ended comments – how to read responses

Key insight

Reviews work best when everyone knows the bar before review starts.

Questions

What this section tests

Whether reviews add value, not noise.

How to read scores

Key insight

Good reviews improve the work, not just comment on it.

Open-ended comments – how to read responses

Key insight

Too much low-value feedback slows work and hurts morale.

Questions

What this section tests

Whether reviews focus on the right level of problems.

How to read scores

Key insight

Reviews should catch real problems early, not just polish code.

Open-ended comments – how to read responses

Key insight

Shallow reviews push problems downstream.

Questions

What this section tests

Whether reviews move work forward smoothly.

How to read scores

Key insight

Clear next steps matter as much as fast feedback.

Open-ended comments – how to read responses

Key insight

Review churn is a sign of unclear standards or timing.

Questions

What this section tests

Whether reviewing is shared and reliable, not fragile.

How to read scores

Key insight

Reviews that depend on a few people will always slow down.

Open-ended comments – how to read responses

Key insight

Review capacity is a system design choice.
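One way to make “depends on a few people” concrete is to measure how much of the review load falls on the most active reviewers. Here is a minimal sketch. It assumes you can export one reviewer name per completed review, and `top_n` is an illustrative parameter rather than a recommended value.

```python
from collections import Counter
from typing import List


def top_reviewer_share(reviewers: List[str], top_n: int = 2) -> float:
    """Fraction of all completed reviews done by the top_n most active reviewers.

    `reviewers` is a hypothetical export with one reviewer name per completed review.
    """
    if not reviewers:
        return 0.0
    counts = Counter(reviewers)
    top = sum(count for _, count in counts.most_common(top_n))
    return top / len(reviewers)
```

A share close to 1.0 for a small `top_n` suggests review capacity is concentrated. When those people are busy or away, review speed drops for everyone.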

Questions

What this section tests

Whether reviews are psychologically safe, not stressful.

How to read scores

Key insight

Unsafe reviews reduce learning and slow improvement.

Open-ended comments – how to read responses

Key insight

Safety determines whether reviews improve code or just approve it.

Question

Weekly – Time spent waiting for reviews, responding to comments, and updating code after feedback

Key insight

Time spent in review is the clearest signal of review health.
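The weekly-time question yields one self-reported number per developer. The following minimal sketch summarizes those numbers at team level only. It assumes numeric hours, and `threshold_h` is a hypothetical cut-off for a heavy review load, not a benchmark. It returns aggregates rather than names, in keeping with the framing that the issue is the system, not individuals.

```python
from statistics import median
from typing import List


def weekly_review_time_summary(hours: List[float], threshold_h: float = 4.0) -> dict:
    """Team-level view of weekly hours spent waiting for reviews, responding, and updating code.

    `threshold_h` is an illustrative cut-off for a heavy review load.
    """
    if not hours:
        raise ValueError("no responses")
    heavy = sum(1 for h in hours if h > threshold_h)
    return {
        "median_h": median(hours),
        "share_above_threshold": heavy / len(hours),
    }
```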

Pattern:

Speed ↓ + Effort ↑

Interpretation

Reviews act as a bottleneck.

Pattern:

Feedback ↓ + Depth ↓

Interpretation

Time is spent on small things instead of real issues.

Pattern:

What to check ↓ + Flow ↓

Interpretation

Teams don’t agree on what “good” looks like.

Pattern:

Coverage ↓ + Speed ↓

Interpretation

Availability controls delivery.
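The four patterns above are simple rules over section results, so you can write them down directly. The sketch assumes hypothetical area keys, a 1 to 5 scale where higher is better, and illustrative thresholds for “low” and “high effort”. Calibrate these against your own data rather than treating them as benchmarks.

```python
from typing import Dict, List

LOW_SCORE = 3.0      # illustrative: an area average below this (1-5 scale) counts as "↓"
HIGH_EFFORT_H = 4.0  # illustrative: median weekly review hours above this counts as "Effort ↑"


def detect_patterns(scores: Dict[str, float], weekly_hours: float) -> List[str]:
    """Return the combined patterns present in one team's results.

    `scores` uses hypothetical area keys: speed, what_to_check, feedback,
    depth, flow, coverage. Missing areas are treated as not low.
    """
    low = {area for area, score in scores.items() if score < LOW_SCORE}
    found = []
    if "speed" in low and weekly_hours > HIGH_EFFORT_H:
        found.append("Speed ↓ + Effort ↑: reviews act as a bottleneck.")
    if {"feedback", "depth"} <= low:
        found.append("Feedback ↓ + Depth ↓: time goes to small things, not real issues.")
    if {"what_to_check", "flow"} <= low:
        found.append("What to check ↓ + Flow ↓: no shared view of what good looks like.")
    if {"coverage", "speed"} <= low:
        found.append("Coverage ↓ + Speed ↓: reviewer availability controls delivery.")
    return found
```

Checking combinations rather than single low scores keeps the focus on interactions. A slow score on its own says less than a slow score paired with concentrated reviewer coverage.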

Reviews are fast, but rework is high.

Feedback helps, but standards aren’t shared.

Reviews matter, but capacity is under-sized.

Polite tone, but power imbalance remains.

Contradictions show where the system looks healthy but still hurts.

What NOT to say

What TO say (use this framing)

“This shows how our review system helps or hurts delivery.”

“The issue isn’t individuals — it’s review timing, clarity, and capacity.”

Show three things only:

Here’s how the DevEx AI tool will guide you toward your first actions.

Goal: Reduce waiting and make review timing predictable.

First steps

Goal: Align expectations before review starts.

First steps

Goal: Increase signal, reduce noise.

First steps

Goal: Ensure reviews catch meaningful issues early.

First steps

Goal: Reduce back-and-forth cycles.

First steps

Goal: Remove reviewer bottlenecks.

First steps

Goal: Maintain psychological safety.

First steps

Goal: Reduce weekly time lost in reviews.

First steps

Speed ↓ + Effort ↑

First steps

Feedback ↓ + Depth ↓

First steps

What to check ↓ + Flow ↓

First steps

Coverage ↓ + Speed ↓

First steps

Reviews are fast, but rework is high.

First steps

Feedback helps, but there is too much back-and-forth.

First steps

Reviews matter, but capacity is insufficient.

First steps

Tone is polite, but disagreement is uncomfortable.

First steps

Improve clarity and readiness before the review starts.

Most review friction comes from unclear expectations or unfinished work entering review.

When:

reviews naturally become faster, deeper, and less stressful.

Introduce a simple PR readiness and review checklist. Example:

Before opening a PR

During review

This single step usually improves:

at the same time.

What you’ve seen here is only a small part of what the DevEx AI platform can do to improve delivery speed, quality, and ease.

If your organization struggles with fragmented metrics, unclear signals across teams, or the frustrating feeling of seeing problems without knowing what to fix, DevEx AI may be exactly what you need. Many engineering organizations operate with disconnected dashboards, conflicting interpretations of performance, and weak feedback loops — which leads to effort spent in the wrong places while real bottlenecks remain untouched.

DevEx AI brings these scattered signals into one coherent view of delivery. It focuses on the inputs that shape performance — how teams work, where friction accumulates, and what slows or accelerates progress — and translates them into clear priorities for action. You gain comparable insights across teams and tech stacks, root-cause visibility grounded in real developer experience, and guidance on where improvement efforts will have the highest impact.

At its core, DevEx AI combines targeted developer surveys with behavioral data to expose hidden friction in the delivery process. AI transforms developers’ free-text comments — often a goldmine of operational truth — into structured insights: recurring problems, root causes, and concrete actions tailored to your environment.

The platform detects patterns across teams, benchmarks results internally and against comparable organizations, and provides context-aware recommendations rather than generic best practices.

Progress on these input factors is tracked over time, enabling teams to verify that changes in ways of working are actually taking hold, while leaders maintain visibility without micromanagement. Expert guidance supports interpretation, prioritization, and the translation of insights into measurable improvements.

To understand whether these changes truly improve delivery outcomes, DevEx AI also measures DORA metrics — Deployment Frequency, Lead Time for Changes, Change Failure Rate, and Mean Time to Recovery — derived directly from repository and delivery data. These output indicators show how software performs in production and whether improvements to developer experience translate into faster, safer releases.
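To show what these four outputs mean arithmetically, here is a minimal sketch over a hypothetical list of deployment records. It is not how DevEx AI derives them. It assumes that each deployment knows the commit times of the changes it shipped, whether it caused a failure, and when service was restored. Recovery time is simplified to “deploy to restore”; many teams instead measure from when the incident starts.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from statistics import mean, median
from typing import List, Optional


@dataclass
class Deployment:
    # Hypothetical record shape, not a real export format.
    deployed_at: datetime
    commit_times: List[datetime] = field(default_factory=list)  # commits shipped in this deploy
    failed: bool = False                                         # caused a production failure?
    restored_at: Optional[datetime] = None                       # when service was restored


def dora_metrics(deploys: List[Deployment], period_days: float) -> dict:
    """Simplified DORA metrics over one observation period."""
    if not deploys or period_days <= 0:
        raise ValueError("need deployments and a positive period")
    hours = timedelta(hours=1)
    # Lead time for changes: commit time -> running in production.
    lead_times = [(d.deployed_at - c) / hours for d in deploys for c in d.commit_times]
    failures = [d for d in deploys if d.failed]
    # Simplified recovery time: failing deploy -> service restored.
    recoveries = [(d.restored_at - d.deployed_at) / hours
                  for d in failures if d.restored_at is not None]
    return {
        "deployment_frequency_per_day": len(deploys) / period_days,
        "median_lead_time_h": median(lead_times) if lead_times else None,
        "change_failure_rate": len(failures) / len(deploys),
        "mean_time_to_recovery_h": mean(recoveries) if recoveries else None,
    }
```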

By combining input metrics (how work happens) with output metrics (what results are achieved), the platform creates a closed feedback loop that connects actions to outcomes, helping organizations learn what actually drives better delivery and where further improvement is needed.

Returning to our topic of code review, you can explore proven practices grounded in hundreds of interviews our team has conducted with engineering leaders, or take a look at Code Review Metrics – The Miro Way.